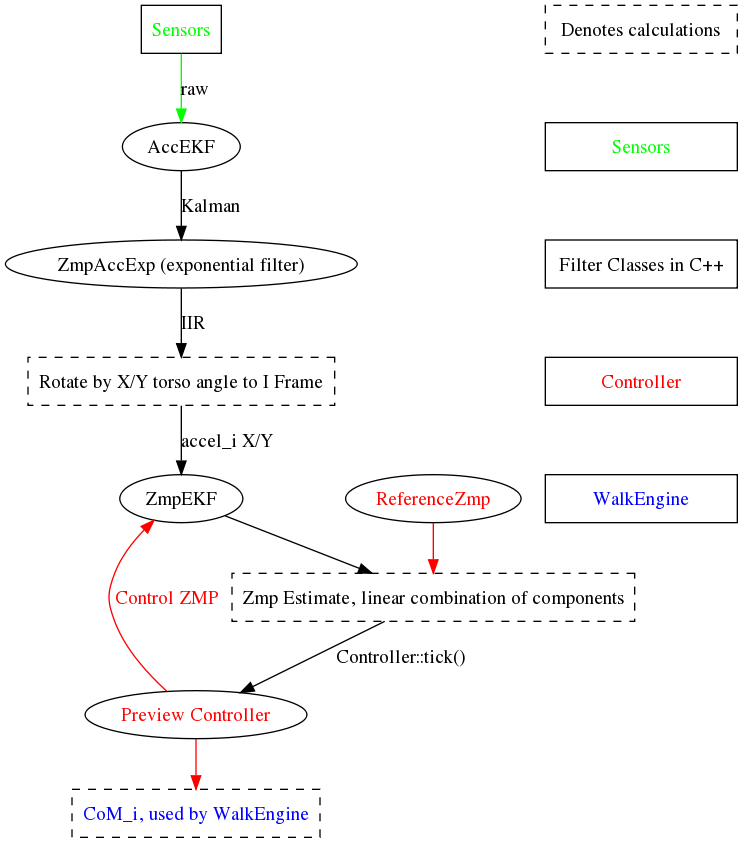

In preparation for Istanbul, we’ve begun overhauling the sensor filtering pipeline of our motion engine. Currently (see the graph) things are pretty insane, with three layers of filtering before any data makes it into the control algorithms. With any luck, we’ll be able to tune the early stages of filtering to reduce any unnecessary delay.
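To get a feel for why stacked filters hurt, here is a rough Python sketch. It is not our actual pipeline: it assumes each of the three layers is a plain moving average (the real stages may be something else entirely), and the names `moving_average` and `frames_to_half` are made up for illustration. It feeds a step through one stage and then through three, and counts how many frames pass before the output reaches half of its final value.

```python
def moving_average(samples, window):
    """Causal moving average; history before the first sample is treated as zero."""
    out = []
    acc = 0.0
    for i, x in enumerate(samples):
        acc += x
        if i >= window:
            acc -= samples[i - window]  # drop the sample that left the window
        out.append(acc / window)
    return out


def frames_to_half(signal, step_index):
    """Frames after the step until the signal first reaches 0.5."""
    for i in range(step_index, len(signal)):
        if signal[i] >= 0.5:
            return i - step_index
    raise ValueError("signal never reached 0.5")


STEP_INDEX = 20
WINDOW = 5  # assumed window length, purely illustrative
raw = [0.0] * STEP_INDEX + [1.0] * 40  # sensor value jumps from 0 to 1

one_stage = moving_average(raw, WINDOW)

three_stages = raw
for _ in range(3):  # three layers of filtering, one after another
    three_stages = moving_average(three_stages, WINDOW)

delay_one = frames_to_half(one_stage, STEP_INDEX)
delay_three = frames_to_half(three_stages, STEP_INDEX)
print(delay_one, delay_three)
```

Under these assumptions the delays simply add up from stage to stage, which is why trimming the early stages is the first thing worth trying.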

Category Archives: Motion

Nao takes his first steps!!!

ERS220 Race in CS320

Here’s a video I took a couple of months back from the first CS320 lab: getting a robot to walk. What was really fascinating was how varied the resulting walks were.

Inter-Objectivity

I am officially the wiz at I-O for the Aperios Operating System. I’ve just completed most of the internal work to make our various OPEN-R objects (the Vision, Motion, and Communication modules) talk to each other in exactly the way I want them to, which is good! Every frame, the motion system now tells the Vision module (and our Brain) its current state and the current joint values. This is a basic building block for better and better behaviors.
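The per-frame hand-off can be pictured with a small Python sketch. This is only an illustration of the idea, not OPEN-R or Aperios code: the real modules are separate objects passing messages, and every name here (`MotionFrame`, `MotionModule`, `subscribe`, `tick`, the joint names) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class MotionFrame:
    """One frame's worth of motion info (hypothetical message layout)."""
    frame_number: int
    state: str
    joint_angles: Dict[str, float]


class MotionModule:
    """Stand-in for the motion system; pushes a MotionFrame to every listener."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[MotionFrame], None]] = []
        self._frame_number = 0

    def subscribe(self, callback: Callable[[MotionFrame], None]) -> None:
        self._subscribers.append(callback)

    def tick(self, state: str, joint_angles: Dict[str, float]) -> MotionFrame:
        # Copy the joint values so listeners cannot alter the sender's data.
        frame = MotionFrame(self._frame_number, state, dict(joint_angles))
        self._frame_number += 1
        for callback in self._subscribers:
            callback(frame)
        return frame


# Vision and the Brain each just collect what they are sent.
vision_inbox: List[MotionFrame] = []
brain_inbox: List[MotionFrame] = []

motion = MotionModule()
motion.subscribe(vision_inbox.append)
motion.subscribe(brain_inbox.append)

motion.tick("standing", {"head_pan": 0.0, "head_tilt": -10.0})
motion.tick("walking", {"head_pan": 5.0, "head_tilt": -10.0})

print(len(vision_inbox), brain_inbox[-1].state)
```

With both listeners getting the same frame every tick, a behavior can line up what the camera saw with where the head and legs actually were at that moment.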

AiboConnect and Motion Engines

I have written a protected page describing how to use aiboConnect and what all of the different joint angles are for the head and legs. In addition, I describe the head literal and the motion engines. Soon I will put up diagrams to go along with the instructions, which will simplify the descriptions considerably.
